After realizing that I’d made the database design too complicated, and eventually redesigning it, I now have an alpha version of the assignment wizard up and running at wizard.lncd.lincoln.ac.uk. The application meets the basic functional requirements of the proposed assignment wizard, but will require further development and testing before it can be used in earnest. The main functions of the current iteration of the assignment wizard are as follows:

Applications

Evaluating ON Course APIs on OUseful.info

Over the course of our project, Dr. Tony Hirst from the Open University has helped us think through the way course data might be visualised and built upon. Recently, he’s written a series of blog posts that discuss this in more detail and offer useful feedback to the project team about our APIs. You can read Tony’s blog posts on OUseful.info.

APMS -> Nucleus -> APIs. How? Why?

The Academic Programme Management System (APMS) is designed to allow read-only access to the course data through APIs. However, these APIs accept very few (if any) search parameters, which made them unsuitable for our use cases when developing applications based around course data. It was therefore necessary to import this data into our own data platform, ‘Nucleus’.

Designing an ‘Assignment Wizard’

Before Christmas I was asking people within the university for ideas about applications that could be developed for them, based around the concept of re-using course data that was already available.

After meeting with a member of staff from the School of Computer Science, we developed an idea around an ‘assignment wizard’ that would make use of the course data already available, such as awards, modules, assessments and staff.

The purpose of this application is to make the process of writing assignment documentation quicker, easier and more accurate. By tying the application in with assessment data, the assessment strategy delivered within the module will be identical to the strategy as defined in the validated module documents.

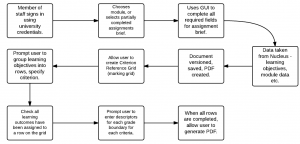

The expected flow of the application is:

As well as reducing the amount of data that has to be entered by academics (such as learning outcomes, module details etc), the versioning and PDF generation will make the writing process more efficient. Further to this, it allows one lecturer to write a part of the assignment brief, and another to log in and complete the assignment.

A follow-up blog post will show the completed application, and start to evaluate it.

Designing a Course Finder Application

Since we now have access to a very large amount of course data, it is possible to look at ways of improving the presentation of, and access to, this data, for example for potential students. As such, I’m looking at building a prototype ‘Course Finder’ application.

Building on the work I outlined in my previous post, we can now identify keywords for all of the courses offered at the university. This offers one way of identifying suitable courses for users of the application, who are likely to be potential students. These courses can also be linked to JACS codes, representing the subjects covered in the courses. Courses are also delivered at a particular level: foundation degree, bachelor’s degree, master’s etc.

The criteria that I am currently considering using to identify potential courses for users are: subjects previously studied, subjects the user is interested in, and keywords (identified with Open Calais).

As well as using these parameters to produce search results, I have also included features within the application to record ‘click-through’ on search results, as well as the ability to ‘recommend’ a search result as being appropriate and relevant to the search parameters outlined above. As such, the application should ‘learn’ as more and more searches are carried out. If parameters A, B and C are specified and one of the courses is recommended as being relevant, then the next time a search is carried out using parameters A, B and C, that course should be highlighted as being potentially more useful and relevant to the user.
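As a rough illustration of how this ‘learning’ could work, here is a minimal Python sketch. It is not the Course Finder’s actual code: the function names, the in-memory counters (standing in for database tables) and the weights are all assumptions made for the example. Each search is reduced to a key built from its parameters, and recorded recommendations and click-throughs for that key are added to a course’s base relevance score.

```python
from collections import Counter

# Hypothetical in-memory stores, standing in for the application's database tables.
# Each maps (search key, course id) -> number of times it was recorded.
recommendations = Counter()
click_throughs = Counter()


def search_key(studied, interests, keywords):
    """Normalise the search parameters so that order and case do not matter."""
    return (
        frozenset(s.lower() for s in studied),
        frozenset(s.lower() for s in interests),
        frozenset(s.lower() for s in keywords),
    )


def record_recommendation(key, course_id):
    """Note that a user marked this course as relevant to this search."""
    recommendations[(key, course_id)] += 1


def record_click(key, course_id):
    """Note that a user clicked through to this course from this search."""
    click_throughs[(key, course_id)] += 1


def rank(key, base_scores, rec_weight=1.0, click_weight=0.1):
    """Return course ids ordered by base score plus learned boosts.

    base_scores maps course id -> relevance from the ordinary search.
    The weights are illustrative assumptions, not tuned values.
    """
    def score(course_id):
        return (
            base_scores[course_id]
            + rec_weight * recommendations[(key, course_id)]
            + click_weight * click_throughs[(key, course_id)]
        )

    return sorted(base_scores, key=score, reverse=True)
```

With base scores of 2.0 for one course and 1.5 for another, the first course ranks higher at the start. After a single call to `record_recommendation` for the second course under the same search key, its score becomes 2.5 and it moves to the top for that combination of parameters only.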

Database Design

Most of the data required to execute the searches is available from our Academic Programme Management System, through our Nucleus data store. The data relating to the individual search instances, as well as the recorded click-throughs and recommendations, will need to be stored within the application’s database. It may also be necessary to store some of the data from Nucleus locally, in order to improve performance by essentially caching views on the data that are unlikely to change too often, such as links between keywords and courses.
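The caching idea can be sketched in a few lines of Python. This is an in-memory stand-in rather than the database-backed approach described above, and the `TTLCache` class and the `fetch` callback (which would wrap a Nucleus API call) are hypothetical names introduced for the example. A value is fetched once and then reused until it is older than a chosen lifetime.

```python
import time


class TTLCache:
    """Keep fetched values for a fixed number of seconds before refetching."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so the cache can be tested without waiting
        self._store = {}    # key -> (expiry time, value)

    def get(self, key, fetch):
        """Return the cached value for key, calling fetch(key) only when needed."""
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]
        value = fetch(key)  # e.g. a call to the remote API (assumed)
        self._store[key] = (now + self.ttl, value)
        return value
```

For data such as keyword-to-course links, which change very rarely, the lifetime could be set to hours or days, so that repeated searches for the same keyword do not trigger repeated API calls.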

Tables storing data locally include:

- keyword course links

- search instances

- search click-throughs

- search interests

- search keywords

- search studied

- search recommendations

- subjects

- similar courses

The majority of the data stored in the tables listed above relates to Coursefinder-specific functionality. Some data has been ‘cached’ from Nucleus, purely to save multiple API calls for data that will change very rarely.
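To make the shape of these tables more concrete, here is a minimal sketch using Python’s built-in `sqlite3` module. It covers only a handful of the tables listed above, and every column name, the choice of SQLite, and the helper functions are assumptions made for illustration, not the application’s real schema.

```python
import sqlite3

# Hypothetical schema: table names follow the list above, columns are assumed.
SCHEMA = """
CREATE TABLE search_instances (
    id INTEGER PRIMARY KEY,
    created_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);
CREATE TABLE search_keywords (
    search_id INTEGER NOT NULL REFERENCES search_instances(id),
    keyword TEXT NOT NULL
);
CREATE TABLE search_click_throughs (
    search_id INTEGER NOT NULL REFERENCES search_instances(id),
    course_id TEXT NOT NULL
);
CREATE TABLE search_recommendations (
    search_id INTEGER NOT NULL REFERENCES search_instances(id),
    course_id TEXT NOT NULL
);
-- Cached locally from Nucleus, since these links change very rarely.
CREATE TABLE keyword_course_links (
    keyword TEXT NOT NULL,
    course_id TEXT NOT NULL,
    PRIMARY KEY (keyword, course_id)
);
"""


def create_database(path=":memory:"):
    """Open a database and create the sketch tables."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def record_search(conn, keywords):
    """Store one search instance and its keywords; return the new search id."""
    cur = conn.execute("INSERT INTO search_instances DEFAULT VALUES")
    search_id = cur.lastrowid
    conn.executemany(
        "INSERT INTO search_keywords (search_id, keyword) VALUES (?, ?)",
        [(search_id, k) for k in keywords],
    )
    conn.commit()
    return search_id


def courses_for_keywords(conn, keywords):
    """Return course ids linked to any of the keywords, most matches first."""
    placeholders = ",".join("?" for _ in keywords)
    rows = conn.execute(
        "SELECT course_id, COUNT(*) AS hits FROM keyword_course_links "
        f"WHERE keyword IN ({placeholders}) "
        "GROUP BY course_id ORDER BY hits DESC, course_id",
        list(keywords),
    )
    return [row[0] for row in rows]
```

Because the keyword lookup runs against the local `keyword_course_links` table, a search needs no API call to Nucleus for that step; the cached table only has to be refreshed when the course data itself changes.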

In a follow-up post, I’ll show the created application and describe the benefits and limitations.